This article discusses a new release of a multimodal Hunyuan Video world model called ‘HunyuanCustom’. The new paper’s breadth of coverage, combined with several issues in many of the supplied example videos on the project page*, constrains us to more general coverage than usual, and to limited reproduction of the large amount of video material accompanying this release (since many of the videos require significant re-editing and processing in order to improve the readability of the layout).

Please note additionally that the paper refers to the API-based generative system Kling as ‘Keling’. For clarity, I refer to ‘Kling’ instead throughout.

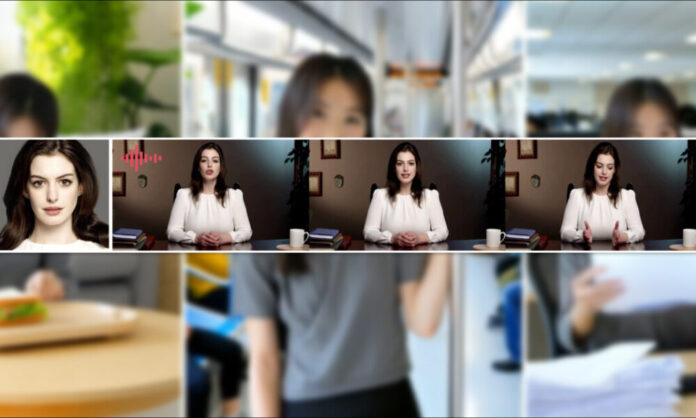

Tencent is in the process of releasing a new version of its Hunyuan Video model, titled HunyuanCustom. The new release is apparently capable of making Hunyuan LoRA models redundant, by allowing the user to create ‘deepfake’-style video customization from a single image:

Click to play. Prompt: ‘A man is listening to music and cooking snail noodles in the kitchen’. The new method compared against both closed-source and open-source methods, including Kling, which is a significant competitor in this space. Source: https://hunyuancustom.github.io/ (warning: CPU/memory-intensive site!)

In the left-most column of the video above, we see the single source image supplied to HunyuanCustom, followed by the new system’s interpretation of the prompt in the second column, next to it. The remaining columns show the results from various proprietary and FOSS systems: Kling; Vidu; Pika; Hailuo; and the Wan-based SkyReels-A2.

In the video below, we see renders of three scenarios central to this release: respectively, person + object; single-character emulation; and virtual try-on (person + clothes):

Click to play. Three examples edited from the material on the supporting site for Hunyuan Video.

We can notice a few things from these examples, mostly related to the system relying on a single source image, instead of multiple images of the same subject.

In the first clip, the man is essentially still facing the camera. He dips his head down and sideways by not much more than 20-25 degrees of rotation; at an inclination beyond that, the system would really have to start guessing what he looks like in profile. This is hard, and probably impossible to gauge accurately from a single frontal image.

In the second example, we see that the little girl is smiling in the rendered video, just as she is in the single static source image. Again, with this sole image as reference, HunyuanCustom would have to make a relatively uninformed guess about what her ‘resting face’ looks like. Additionally, her face does not deviate from a camera-facing stance by more than in the prior example (‘man eating crisps’).

In the last example, we see that since the source material – the woman and the clothes she is prompted into wearing – does not consist of complete images, the render has cropped the scenario to fit – which is actually a rather good solution to a data issue!

The point is that although the new system can handle multiple images (such as person + crisps, or person + clothes), it does not apparently allow for multiple angles or alternative views of a single character, through which diverse expressions or unusual angles could be accommodated. To this extent, the system may therefore struggle to replace the growing ecosystem of LoRA models that have sprung up around HunyuanVideo since its release last December, since these can help HunyuanVideo to produce consistent characters from any angle and with any facial expression represented in the training dataset (20-60 images is typical).

Wired for Sound

For audio, HunyuanCustom leverages the LatentSync system (notoriously hard for hobbyists to set up and get good results from) to obtain lip movements that are matched to audio and text that the user supplies:

Features audio. Click to play. Various examples of lip-sync from the HunyuanCustom supplementary site, edited together.

At the time of writing, there are no English-language examples, but these appear to be rather good – the more so if the method of creating them turns out to be easily installable and accessible.

Editing Existing Video

The new system offers what appear to be very impressive results for video-to-video (V2V, or Vid2Vid) editing, whereby a segment of an existing (real) video is masked off and intelligently replaced by a subject given in a single reference image. Below is an example from the supplementary materials site:

Click to play. Only the central object is targeted, but what remains around it also gets altered in a HunyuanCustom vid2vid pass.

As we can see, and as is standard in a vid2vid scenario, the entire video is altered to some extent by the process, though the targeted region (i.e., the plush toy) is altered most. Presumably pipelines could be developed to create such transformations under a garbage matte approach that leaves the majority of the video content identical to the original. This is what Adobe Firefly does under the hood, and does quite well – but it is an under-studied process in the FOSS generative scene.
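The garbage-matte idea can be illustrated as a per-pixel composite: generated pixels are kept only inside a slightly dilated mask around the target, and the original footage passes through untouched everywhere else. This is a minimal, generic sketch under my own assumptions (grayscale frames as nested lists, one binary mask per frame, a hypothetical `dilate_mask` helper); it is not how Adobe Firefly or HunyuanCustom actually implement anything.

```python
from typing import List

Frame = List[List[float]]  # grayscale frame as rows of pixel values
Mask = List[List[int]]     # 1 = region to replace, 0 = keep original


def dilate_mask(mask: Mask, radius: int = 1) -> Mask:
    """Grow a binary mask by `radius` pixels so the matte loosely covers the target."""
    h, w = len(mask), len(mask[0])
    out = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            if not mask[y][x]:
                continue
            for yy in range(max(0, y - radius), min(h, y + radius + 1)):
                for xx in range(max(0, x - radius), min(w, x + radius + 1)):
                    out[yy][xx] = 1
    return out


def composite_frame(original: Frame, generated: Frame, matte: Mask) -> Frame:
    """Keep generated pixels inside the matte and original pixels everywhere else."""
    return [
        [g if m else o for o, g, m in zip(o_row, g_row, m_row)]
        for o_row, g_row, m_row in zip(original, generated, matte)
    ]


def composite_clip(originals: List[Frame], generated: List[Frame],
                   masks: List[Mask], radius: int = 1) -> List[Frame]:
    """Apply the garbage-matte composite frame by frame."""
    return [
        composite_frame(o, g, dilate_mask(m, radius))
        for o, g, m in zip(originals, generated, masks)
    ]
```

The dilation radius trades off seam visibility against how much of the untouched footage is exposed to the generator's drift; a real pipeline would also feather the matte edge rather than switch hard between sources.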

That said, most of the other examples provided do a better job of targeting these integrations, as we can see in the assembled compilation below:

Click to play. Various examples of content inserted using vid2vid in HunyuanCustom, showing notable respect for the untargeted material.

A New Start?

This initiative is a development of the Hunyuan Video project, not a hard pivot away from that line of development. The project’s improvements are introduced as discrete architectural insertions rather than sweeping structural changes, aiming to allow the model to maintain identity fidelity across frames without relying on subject-specific fine-tuning, as LoRA or textual inversion approaches do.

To be clear, therefore, HunyuanCustom is not trained from scratch, but rather is a fine-tuning of the December 2024 HunyuanVideo foundation model.

Those who have developed HunyuanVideo LoRAs may wonder whether they will still work with this new edition, or whether they will have to reinvent the LoRA wheel yet again if they want more customization capabilities than are built into this new release.

In general, a heavily fine-tuned release of a hyperscale model alters the model weights enough that LoRAs made for the earlier model will not work properly, or at all, with the newly refined model.
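The reason can be stated in terms of the standard LoRA formulation (a general statement about LoRA, not a detail taken from the HunyuanCustom paper): an adapter stores only a low-rank update that was optimized against one specific set of base weights $W_0$.

$$
W_{\text{LoRA}} = W_0 + \frac{\alpha}{r} B A, \qquad B \in \mathbb{R}^{d \times r},\ A \in \mathbb{R}^{r \times k},\ r \ll \min(d, k)
$$

If a fine-tune moves the base weights to $W_1 = W_0 + \Delta W_{\text{ft}}$, loading the old adapter onto the new base gives

$$
W_1 + \frac{\alpha}{r} B A = W_{\text{LoRA}} + \Delta W_{\text{ft}},
$$

so every adapted layer is offset from what the adapter was trained to produce by the whole fine-tuning update. When $\Delta W_{\text{ft}}$ is small, the adapter may still roughly work; after heavy fine-tuning, with the non-linearities between layers compounding the drift, it usually will not.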

Sometimes, however, a fine-tune’s popularity can challenge its origins: one example of a fine-tune becoming an effective fork, with a dedicated ecosystem and following of its own, is the Pony Diffusion tuning of Stable Diffusion XL (SDXL). Pony currently has 592,000+ downloads on the ever-changing CivitAI domain, with a vast range of LoRAs that have used Pony (and not SDXL) as the base model, and which require Pony at inference time.

Releasing

The project page for the new paper (which is titled HunyuanCustom: A Multimodal-Driven Architecture for Customized Video Generation) features links to a GitHub site that, as I write, has just become functional, and appears to contain all code and necessary weights for local implementation, together with a proposed timeline (where the only important item yet to come is ComfyUI integration).

At the time of writing, the project’s Hugging Face presence is still a 404. There is, however, an API-based version where one can apparently demo the system, as long as you can provide a WeChat scan code.

I have rarely seen such an elaborate and extensive use of such a wide variety of projects in a single assembly as is evident in HunyuanCustom – and presumably some of the licenses would in any case oblige a full release.

Two models are offered on the GitHub page: a 720px1280px version requiring 80GB of GPU peak memory, and a 512px896px version requiring 60GB of GPU peak memory.

The repository states ‘The minimum GPU memory required is 24GB for 720px1280px129f but very slow…We recommend using a GPU with 80GB of memory for better generation quality’ – and notes that the system has so far only been tested on Linux.

The earlier Hunyuan Video model has, since its official release, been quantized down to sizes where it can run on less than 24GB of VRAM, and it seems reasonable to assume that the new model will likewise be adapted into more consumer-friendly forms by the community, and that it will quickly be adapted for use on Windows systems too.
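Why quantization matters here comes down to simple arithmetic. The sketch below estimates the memory needed just to hold the weights, assuming roughly 13 billion parameters (following the ‘HunyuanVideo-13B’ name the authors use later) and ignoring the text encoders, the VAE and activations, all of which push real peak usage considerably higher.

```python
def weight_memory_gb(num_params: float, bits_per_param: float) -> float:
    """Memory needed to hold the weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


# Assumption: ~13B parameters for the diffusion transformer only;
# encoders, VAE and activations are deliberately left out.
PARAMS = 13e9

for label, bits in [("bf16", 16), ("8-bit", 8), ("4-bit", 4)]:
    print(f"{label}: ~{weight_memory_gb(PARAMS, bits):.1f} GB")
```

This prints roughly 26.0 GB for bf16, 13.0 GB for 8-bit and 6.5 GB for 4-bit weights: at 16 bits the weights alone already exceed a 24GB card, while 8-bit or lower leaves headroom for everything else.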

Due to time constraints and the overwhelming amount of information accompanying this release, we can only take a broad, rather than in-depth, look at it. Nonetheless, let’s pop the hood on HunyuanCustom a little.

A Look at the Paper

The data pipeline for HunyuanCustom, apparently compliant with the GDPR framework, incorporates both synthesized and open-source video datasets, including OpenHumanVid, with eight core categories represented: humans, animals, plants, landscapes, vehicles, objects, architecture, and anime.

From the release paper, an overview of the diverse contributing packages in the HunyuanCustom data construction pipeline. Source: https://arxiv.org/pdf/2505.04512

Initial filtering begins with PySceneDetect, which segments videos into single-shot clips. TextBPN-Plus-Plus is then used to remove videos containing excessive on-screen text, subtitles, watermarks, or logos.

To address inconsistencies in resolution and duration, clips are standardized to five seconds in length and resized to 512 or 720 pixels on the short side. Aesthetic filtering is handled using Koala-36M, with a custom threshold of 0.06 applied for the custom dataset curated by the new paper’s researchers.
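The standardization step can be sketched as three small helpers: rescaling so the short side hits a target, computing how many frames a five-second clip needs at a given frame rate, and applying the aesthetic cut-off. These are illustrative assumptions, not the authors’ code: how a clip is assigned to 512 versus 720 is left to the caller, and treating 0.06 as a ‘keep if at least’ threshold is my own reading.

```python
from typing import Optional, Tuple


def resize_short_side(width: int, height: int, target_short: int) -> Tuple[int, int]:
    """Scale (width, height) so the shorter side equals target_short, keeping aspect ratio."""
    scale = target_short / min(width, height)
    return round(width * scale), round(height * scale)


def frames_for_duration(num_frames: int, fps: float, seconds: float = 5.0) -> Optional[int]:
    """Frames to keep for a fixed-length clip, or None if the clip is too short."""
    needed = int(round(fps * seconds))
    return needed if num_frames >= needed else None


def passes_aesthetic(score: float, threshold: float = 0.06) -> bool:
    """Keep clips whose aesthetic score reaches the threshold (>= is an assumption)."""
    return score >= threshold
```

For example, a 1920×1080 clip targeted at a 720-pixel short side becomes 1280×720, and a 300-frame clip at 25fps is trimmed to 125 frames.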

The subject extraction process combines the Qwen7B Large Language Model (LLM), the YOLO11X object recognition framework, and the popular InsightFace architecture to identify and validate human identities.

For non-human subjects, QwenVL and Grounded SAM 2 are used to extract relevant bounding boxes, which are discarded if too small.

Examples of semantic segmentation with Grounded SAM 2, used in the HunyuanCustom project. Source: https://github.com/IDEA-Research/Grounded-SAM-2

Multi-subject extraction uses Florence2 for bounding box annotation and Grounded SAM 2 for segmentation, followed by clustering and temporal segmentation of training frames.

The processed clips are further enriched through annotation, using a proprietary structured-labeling system developed by the Hunyuan team, which furnishes layered metadata such as descriptions and camera motion cues.

Mask augmentation strategies, including conversion to bounding boxes, were applied during training to reduce overfitting and ensure that the model adapts to diverse object shapes.
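The bounding-box conversion can be illustrated as follows: with some probability, a precise segmentation mask is swapped for the filled rectangle that encloses it, so the model cannot learn to depend on an exact object silhouette. This is a minimal sketch; the mixing probability `p_bbox` is a hypothetical parameter, and the paper’s other augmentations are not shown.

```python
import random
from typing import List, Optional

Mask = List[List[int]]  # 1 = object pixel, 0 = background


def mask_to_bbox(mask: Mask) -> Mask:
    """Replace a binary mask with the filled axis-aligned box that encloses it."""
    h, w = len(mask), len(mask[0])
    ys = [y for y in range(h) for x in range(w) if mask[y][x]]
    xs = [x for y in range(h) for x in range(w) if mask[y][x]]
    if not ys:  # empty mask: nothing to enclose
        return [[0] * w for _ in range(h)]
    y0, y1, x0, x1 = min(ys), max(ys), min(xs), max(xs)
    return [[1 if y0 <= y <= y1 and x0 <= x <= x1 else 0 for x in range(w)]
            for y in range(h)]


def augment_mask(mask: Mask, p_bbox: float = 0.5,
                 rng: Optional[random.Random] = None) -> Mask:
    """With probability p_bbox, swap the precise mask for its bounding box."""
    rng = rng or random.Random()
    return mask_to_bbox(mask) if rng.random() < p_bbox else mask
```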

Audio data was synchronized using the aforementioned LatentSync, and clips were discarded if their synchronization scores fell below a minimum threshold.

The blind image quality assessment framework HyperIQA was used to exclude videos scoring under 40 (on HyperIQA’s own scale). Valid audio tracks were then processed with Whisper to extract features for downstream tasks.
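The two quality gates described above can be expressed as a simple filter over per-clip metadata. This is a sketch under stated assumptions: the `ClipRecord` fields are hypothetical, the paper’s sync threshold is not given here and so is left as a required argument, and keeping clips that have no audio track at all is my own choice.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ClipRecord:
    """Hypothetical per-clip metadata; field names are illustrative."""
    clip_id: str
    hyperiqa_score: float        # blind quality score on HyperIQA's scale
    sync_score: Optional[float]  # audio-visual sync confidence; None if no audio


def filter_clips(clips: List[ClipRecord], min_sync: float,
                 min_quality: float = 40.0) -> List[ClipRecord]:
    """Drop clips scoring under min_quality, and clips whose audio sync is below
    min_sync. Clips without audio are kept (an assumption)."""
    kept = []
    for clip in clips:
        if clip.hyperiqa_score < min_quality:
            continue
        if clip.sync_score is not None and clip.sync_score < min_sync:
            continue
        kept.append(clip)
    return kept
```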

The authors incorporate the LLaVA language assistant model during the annotation phase, and they emphasize the central place that this framework has in HunyuanCustom. LLaVA is used to generate image captions and to help align visual content with text prompts, supporting the construction of a coherent training signal across modalities:

The HunyuanCustom framework supports identity-consistent video generation conditioned on text, image, audio, and video inputs.

By leveraging LLaVA’s vision-language alignment capabilities, the pipeline gains an additional layer of semantic consistency between visual elements and their textual descriptions – especially useful in multi-subject or complex-scene scenarios.

Custom Video

To allow video generation based on a reference image and a prompt, two modules centered on LLaVA were created, the first of which adapts the input structure of HunyuanVideo so that it can accept an image along with text.

This involved formatting the prompt in a way that either embeds the image directly or tags it with a short identity description. A separator token was used to stop the image embedding from overwhelming the prompt content.
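At the string level, the idea amounts to a template that places an image slot (optionally followed by a short identity tag) before a separator, with the text prompt after it. The token strings below are hypothetical placeholders invented for illustration; the actual special tokens and template used by HunyuanCustom are not described here.

```python
from typing import Optional

# Hypothetical placeholder strings, not the model's real special tokens.
IMAGE_TOKEN = "<image>"
SEPARATOR = "<sep>"


def build_prompt(text: str, identity_tag: Optional[str] = None) -> str:
    """Put the image slot (optionally with a short identity tag) before a
    separator, keeping the image embedding apart from the text prompt."""
    head = IMAGE_TOKEN if identity_tag is None else f"{IMAGE_TOKEN} {identity_tag}"
    return f"{head} {SEPARATOR} {text}"
```

In a real pipeline the image slot would be replaced by the visual encoder’s embedding sequence rather than a literal string.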

Since LLaVA’s visual encoder tends to compress or discard fine-grained spatial details during the alignment of image and text features (particularly when translating a single reference image into a general semantic embedding), an identity enhancement module was incorporated. Because nearly all video latent diffusion models have some difficulty maintaining an identity without a LoRA, even in a five-second clip, the performance of this module in community testing may prove significant.

In any case, the reference image is then resized and encoded using the causal 3D-VAE from the original HunyuanVideo model, and its latent is inserted into the video latent along the temporal axis, with a spatial offset applied to prevent the image from being directly reproduced in the output while still guiding generation.
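One way to picture this is in terms of token positions. The sketch assumes HunyuanVideo’s published VAE compression (4× in time, 8× in space, with the first frame kept) and then makes two further assumptions of my own: the image latent is placed as one extra frame at temporal index -1, and the ‘spatial offset’ shifts that frame’s (h, w) position indices, as would be fed to a positional encoding, so they never coincide with those of any video token.

```python
from typing import List, Tuple

Pos = Tuple[int, int, int]  # (t, h, w) position index of one latent token


def latent_shape(frames: int, height: int, width: int,
                 t_stride: int = 4, s_stride: int = 8) -> Tuple[int, int, int]:
    """Latent (T, H, W) for a causal 3D-VAE: the first frame is kept and the rest
    are compressed t_stride-fold in time; space is compressed s_stride-fold."""
    return (frames - 1) // t_stride + 1, height // s_stride, width // s_stride


def joint_positions(video_t: int, h: int, w: int, spatial_offset: int) -> List[Pos]:
    """Positions for [reference image frame] + [video frames] along the time axis.
    The image sits at t = -1 and its (h, w) indices are shifted by spatial_offset."""
    ref = [(-1, y + spatial_offset, x + spatial_offset)
           for y in range(h) for x in range(w)]
    video = [(t, y, x) for t in range(video_t) for y in range(h) for x in range(w)]
    return ref + video
```

Under these assumptions a 129-frame 720×1280 clip becomes a 33×90×160 latent, and a single reference image encodes to one latent frame of 90×160, which is what makes temporal concatenation straightforward.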

The model was trained using Flow Matching, with noise levels (timesteps) drawn from a logit-normal distribution, and the network was trained to recover the correct video from these noisy latents. LLaVA and the video generator were fine-tuned together, so that the image and prompt could guide the output more fluently and keep the subject identity consistent.
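A single flow-matching training step can be sketched on a flattened latent. This follows one common convention (linear interpolation from clean data at t = 0 to noise at t = 1, with the network regressing the constant velocity between them, as in rectified-flow setups such as Stable Diffusion 3); it is an assumption that HunyuanCustom uses exactly this parameterization, and the model here is any callable standing in for the real network.

```python
import math
import random
from typing import Callable, List

Vector = List[float]
Model = Callable[[Vector, float], Vector]


def sample_logit_normal(rng: random.Random, mean: float = 0.0, std: float = 1.0) -> float:
    """t = sigmoid(n) with n ~ N(mean, std); favours mid-range noise levels."""
    n = rng.gauss(mean, std)
    return 1.0 / (1.0 + math.exp(-n))


def flow_matching_loss(model: Model, x0: Vector, rng: random.Random) -> float:
    """Single-sample loss on a flattened clean latent x0."""
    t = sample_logit_normal(rng)
    noise = [rng.gauss(0.0, 1.0) for _ in x0]
    # Straight path: clean data at t = 0, pure noise at t = 1.
    xt = [(1.0 - t) * a + t * e for a, e in zip(x0, noise)]
    # Regression target: the constant velocity along that path.
    target = [e - a for a, e in zip(x0, noise)]
    pred = model(xt, t)
    return sum((p - v) ** 2 for p, v in zip(pred, target)) / len(x0)
```

The logit-normal draw concentrates training on intermediate noise levels, where the prediction task is hardest, rather than sampling t uniformly.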

For multi-subject prompts, each image-text pair was embedded separately and assigned a distinct temporal position, allowing identities to be distinguished and supporting the generation of scenes involving multiple interacting subjects.

Sound and Vision

HunyuanCustom conditions audio-driven generation on both user-supplied audio and a text prompt, allowing characters to speak within scenes that reflect the described setting.

To support this, an Identity-disentangled AudioNet module introduces audio features without disrupting the identity signals embedded from the reference image and prompt. These features are aligned with the compressed video timeline, divided into frame-level segments, and injected using a spatial cross-attention mechanism that keeps each frame isolated, preserving subject consistency and avoiding temporal interference.
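The frame-isolated injection can be sketched as follows: the audio feature sequence (plausibly Whisper features, given the pipeline described earlier) is split into one contiguous segment per latent frame, and each frame’s spatial tokens cross-attend only to their own segment before the result is added back residually. With a 4× temporally compressing VAE, 129 video frames map to 33 latent frames, which is why the audio must be aligned to the compressed timeline rather than to raw frames. This is a generic sketch, not AudioNet itself: it uses a single head, no learned projections, and assumes audio and token dimensions already match.

```python
import math
from typing import List

Vec = List[float]


def split_per_frame(audio: List[Vec], num_frames: int) -> List[List[Vec]]:
    """Divide audio features into contiguous segments, one per latent frame."""
    if len(audio) < num_frames:
        raise ValueError("need at least one audio feature per latent frame")
    bounds = [i * len(audio) // num_frames for i in range(num_frames + 1)]
    return [audio[bounds[i]:bounds[i + 1]] for i in range(num_frames)]


def cross_attend(queries: List[Vec], keys: List[Vec]) -> List[Vec]:
    """Single-head scaled dot-product attention; keys double as values and there
    are no learned projections, to keep the sketch short."""
    d = len(queries[0])
    out = []
    for q in queries:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in keys]
        m = max(scores)  # subtract the max for a numerically stable softmax
        weights = [math.exp(s - m) for s in scores]
        total = sum(weights)
        out.append([sum(w * k[j] for w, k in zip(weights, keys)) / total for j in range(d)])
    return out


def inject_audio(video: List[List[Vec]], audio: List[Vec]) -> List[List[Vec]]:
    """video[f] holds the spatial tokens of latent frame f. Each frame attends
    only to its own audio segment; the result is added residually."""
    segments = split_per_frame(audio, len(video))
    result = []
    for tokens, segment in zip(video, segments):
        attended = cross_attend(tokens, segment)
        result.append([[t + a for t, a in zip(tok, att)] for tok, att in zip(tokens, attended)])
    return result
```

Because each frame only ever sees its own segment, changing the audio for one stretch of time cannot alter the tokens of any other frame, which is the isolation property the paper describes.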

A second, temporal injection module provides finer control over timing and motion, working in tandem with AudioNet by mapping audio features to specific regions of the latent sequence and using a Multi-Layer Perceptron (MLP) to convert them into token-wise motion offsets. This allows gestures and facial movement to follow the rhythm and emphasis of the spoken input with greater precision.
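The MLP-to-offsets idea can be sketched as below: for each latent frame, one audio feature vector is passed through a small MLP whose output is reshaped into one offset vector per token, and those offsets are added to the frame’s tokens. The layer sizes, the bias-free layers, the random stand-in weights and the one-feature-per-frame alignment are all assumptions for illustration, not the paper’s design.

```python
import math
import random
from typing import List, Tuple

Vec = List[float]
Params = Tuple[List[Vec], List[Vec]]


def make_mlp(in_dim: int, hidden: int, out_dim: int, seed: int = 0) -> Params:
    """Two bias-free linear layers with random weights (stand-ins for trained ones)."""
    rng = random.Random(seed)
    w1 = [[rng.gauss(0.0, 0.1) for _ in range(in_dim)] for _ in range(hidden)]
    w2 = [[rng.gauss(0.0, 0.1) for _ in range(hidden)] for _ in range(out_dim)]
    return w1, w2


def gelu(x: float) -> float:
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))


def mlp(params: Params, x: Vec) -> Vec:
    w1, w2 = params
    h = [gelu(sum(w * xi for w, xi in zip(row, x))) for row in w1]
    return [sum(w * hi for w, hi in zip(row, h)) for row in w2]


def inject_motion(video: List[List[Vec]], audio_per_frame: List[Vec],
                  params: Params) -> List[List[Vec]]:
    """For each latent frame, map its audio feature to one offset per token
    (num_tokens * dim outputs, reshaped) and add the offsets to the tokens."""
    out = []
    for tokens, feat in zip(video, audio_per_frame):
        dim = len(tokens[0])
        flat = mlp(params, feat)
        if len(flat) != len(tokens) * dim:
            raise ValueError("MLP output size must equal num_tokens * dim")
        offsets = [flat[i * dim:(i + 1) * dim] for i in range(len(tokens))]
        out.append([[t + o for t, o in zip(tok, off)] for tok, off in zip(tokens, offsets)])
    return out
```

Because the layers have no biases and GELU(0) = 0, a silent (all-zero) audio feature yields zero offsets and leaves that frame’s tokens untouched, which makes the audio’s contribution easy to isolate when inspecting the sketch.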

HunyuanCustom allows subjects in existing videos to be edited directly, replacing or inserting people or objects into a scene without needing to rebuild the entire clip from scratch. This makes it useful for tasks that involve changing appearance or motion in a targeted way.

Click to play. A further example from the supplementary site.

To facilitate efficient subject replacement in existing videos, the new system avoids the resource-intensive approach of recent methods such as the currently popular VACE, or those that merge entire video sequences together. Instead, it compresses a reference video using the pretrained causal 3D-VAE, aligns it with the generation pipeline’s internal video latents, and then adds the two together. This keeps the process relatively lightweight, while still allowing external video content to guide the output.

A small neural network handles the alignment between the clean input video and the noisy latents used in generation. The system tests two ways of injecting this information: merging the two sets of features before compressing them again; and adding the features frame by frame. The authors found that the second method works better, avoiding quality loss while keeping the computational load unchanged.
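The two injection variants can be contrasted on per-frame feature vectors. In this sketch the ‘small neural network’ is reduced to a single linear alignment layer (`align_w`, an assumption), and the merge-then-compress variant uses a hypothetical projection `compress_w` from 2C back to C channels.

```python
from typing import List

Vec = List[float]
Matrix = List[Vec]


def linear(w: Matrix, x: Vec) -> Vec:
    return [sum(wi * xi for wi, xi in zip(row, x)) for row in w]


def inject_concat_compress(noisy: List[Vec], ref: List[Vec],
                           align_w: Matrix, compress_w: Matrix) -> List[Vec]:
    """Variant 1: concatenate [noisy ; aligned ref] per frame (2C channels),
    then compress back to C channels with an extra learned projection."""
    return [linear(compress_w, n + linear(align_w, r)) for n, r in zip(noisy, ref)]


def inject_add(noisy: List[Vec], ref: List[Vec], align_w: Matrix) -> List[Vec]:
    """Variant 2: add the aligned reference features frame by frame
    (C channels throughout, no extra projection)."""
    return [[a + b for a, b in zip(n, linear(align_w, r))] for n, r in zip(noisy, ref)]
```

Frame-wise addition is the special case of concatenation followed by a fixed [I | I] projection, so it keeps the channel count unchanged and needs no additional C×2C weights, which is consistent with the authors’ observation that it leaves the computational load unchanged.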

Data and Tests

In tests, the metrics used were: identity consistency via ArcFace, which extracts facial embeddings from both the reference image and each frame of the generated video, and then calculates the average cosine similarity between them; subject similarity, computed by sending YOLO11x segments to DINOv2 for comparison; CLIP-B text-video alignment, which measures similarity between the prompt and the generated video; temporal consistency, again using CLIP-B, to calculate the similarity between each frame and both its neighboring frames and the first frame; and dynamic degree, as defined by VBench.
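The two similarity-based metrics can be sketched on precomputed embeddings (the ArcFace and CLIP encoders themselves are not reproduced). Face similarity follows the description directly; for temporal consistency, the exact aggregation is not spelled out here, so averaging each frame’s similarity to its previous neighbor and to the first frame is an assumption.

```python
import math
from typing import List

Vec = List[float]


def cosine(a: Vec, b: Vec) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm


def face_sim(reference: Vec, frames: List[Vec]) -> float:
    """Mean cosine similarity between the reference embedding and every frame."""
    return sum(cosine(reference, f) for f in frames) / len(frames)


def temporal_consistency(frames: List[Vec]) -> float:
    """For each frame after the first, average its similarity to the previous
    frame and to the first frame, then average over frames (aggregation assumed)."""
    if len(frames) < 2:
        raise ValueError("need at least two frames")
    scores = [0.5 * (cosine(frames[i], frames[i - 1]) + cosine(frames[i], frames[0]))
              for i in range(1, len(frames))]
    return sum(scores) / len(scores)
```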

As indicated earlier, the baseline closed-source rivals were Hailuo; Vidu 2.0; Kling (1.6); and Pika. The competing FOSS frameworks were VACE and SkyReels-A2.

Model performance evaluation comparing HunyuanCustom with leading video customization methods across ID consistency (Face-Sim), subject similarity (DINO-Sim), text-video alignment (CLIP-B-T), temporal consistency (Temp-Consis), and motion intensity (DD). Optimal and sub-optimal results are shown in bold and underlined, respectively.

Of these results, the authors state:

‘Our [HunyuanCustom] achieves the best ID consistency and subject consistency. It also achieves comparable results in prompt following and temporal consistency. [Hailuo] has the best clip score because it can follow text instructions well with only ID consistency, sacrificing the consistency of non-human subjects (the worst DINO-Sim). In terms of Dynamic-degree, [Vidu] and [VACE] perform poorly, which may be due to the small size of the model.’

Though the project site is saturated with comparison videos (the layout of which seems to have been designed for website aesthetics rather than easy comparison), it does not currently feature a video equivalent of the static results crammed together in the PDF for the initial qualitative tests. Though I include the figure here, I encourage the reader to examine the videos at the project site closely, as they give a better impression of the results:

From the paper, a comparison on object-centered video customization. Though the viewer should (as always) refer to the source PDF for better resolution, the videos at the project site might be a more illuminating resource in this case.

The authors comment here:

‘It can be seen that [Vidu], [Skyreels A2] and our method achieve relatively good results in prompt alignment and subject consistency, but our video quality is better than Vidu and Skyreels, thanks to the good video generation performance of our base model, i.e., [Hunyuanvideo-13B].

‘Among commercial products, although [Kling] has a good video quality, the first frame of the video has a copy-paste [problem], and sometimes the subject moves too fast and [blurs], leading to a poor viewing experience.’

The authors further comment that Pika performs poorly in terms of temporal consistency, introducing subtitle artifacts (the result of poor data curation, where text elements in video clips have been allowed to pollute the core concepts).

Hailuo maintains facial identity, they state, but fails to preserve full-body consistency. Among open-source methods, VACE, the researchers assert, is unable to maintain identity consistency, whereas they contend that HunyuanCustom produces videos with strong identity preservation, while retaining quality and diversity.

Next, tests were conducted for multi-subject video customization against the same contenders. As in the previous example, the flattened PDF results are not static equivalents of videos available at the project site, but are unique among the results presented:

Comparisons using multi-subject video customizations. Please see PDF for better detail and resolution.

The paper states:

‘[Pika] can generate the specified subjects but exhibits instability in video frames, with instances of a man disappearing in one scenario and a woman failing to open a door as prompted. [Vidu] and [VACE] partially capture human identity but lose significant details of non-human objects, indicating a limitation in representing non-human subjects.

‘[SkyReels A2] experiences severe frame instability, with noticeable changes in chips and numerous artifacts in the right scenario.

‘In contrast, our HunyuanCustom effectively captures both human and non-human subject identities, generates videos that adhere to the given prompts, and maintains high visual quality and stability.’

A further experiment was ‘virtual human advertisement’, whereby the frameworks were tasked with integrating a product with a person:

From the qualitative testing round, examples of neural ‘product placement’. Please see PDF for better detail and resolution.

For this round, the authors state:

‘The [results] demonstrate that HunyuanCustom effectively maintains the identity of the human while preserving the details of the target product, including the text on it.

‘Furthermore, the interaction between the human and the product appears natural, and the video adheres closely to the given prompt, highlighting the substantial potential of HunyuanCustom in generating advertisement videos.’

One area where video results would have been very useful was the qualitative round for audio-driven subject customization, where the character speaks the corresponding audio in a text-described scene and posture.

Partial results given for the audio round – though video results might have been preferable in this case. Only the top half of the PDF figure is reproduced here, as it is large and hard to accommodate in this article. Please refer to the source PDF for better detail and resolution.

The authors assert:

‘Previous audio-driven human animation methods input a human image and an audio, where the human posture, attire, and environment remain consistent with the given image and cannot generate videos in other gesture and environment, which may [restrict] their application.

‘…[Our] HunyuanCustom enables audio-driven human customization, where the character speaks the corresponding audio in a text-described scene and posture, allowing for more flexible and controllable audio-driven human animation.’

Further tests (please see PDF for all details) included a round pitting the new system against VACE and Kling 1.6 for video subject replacement:

Testing subject replacement in video-to-video mode. Please refer to the source PDF for better detail and resolution.

Of these, the last tests presented in the new paper, the researchers opine:

‘VACE suffers from boundary artifacts due to strict adherence to the input masks, resulting in unnatural subject shapes and disrupted motion continuity. [Kling], in contrast, exhibits a copy-paste effect, where subjects are directly overlaid onto the video, leading to poor integration with the background.

‘In comparison, HunyuanCustom effectively avoids boundary artifacts, achieves seamless integration with the video background, and maintains strong identity preservation, demonstrating its superior performance in video editing tasks.’

Conclusion

This is a fascinating release, not least because it addresses something that the ever-discontented hobbyist scene has been complaining about more lately – the lack of lip-sync – so that the increased realism achievable in systems such as Hunyuan Video and Wan 2.1 might be given a new dimension of authenticity.

Though the layout of nearly all the comparative video examples at the project site makes it rather difficult to compare HunyuanCustom’s capabilities against prior contenders, it must be noted that very, very few projects in the video synthesis space have the courage to pit themselves in tests against Kling, the commercial video diffusion API that is always hovering at or near the top of the leaderboards; Tencent appears to have made headway against this incumbent in a rather impressive manner.

* The issue being that some of the videos are so wide, short, and high-resolution that they will not play in standard video players such as VLC or Windows Media Player, which display black screens instead.

First published Thursday, May 8, 2025