After testing the various models in Google’s new Gemini 2.0 family, something interesting becomes clear: Google is exploring the potential of specialized AI systems working in concert, similar to OpenAI’s approach.

Google has structured its AI offerings around practical use cases, from quick response systems to deep reasoning engines. Each model serves a specific purpose, and together they form a comprehensive toolkit for different AI tasks.

What stands out is the design behind each model’s capabilities. Flash processes massive contexts, Pro handles complex coding tasks, and Flash Thinking brings a structured approach to problem-solving.

Google’s development of Gemini 2.0 reflects careful consideration of how AI systems are actually used in practice. While its earlier approaches focused on general-purpose models, this release shows a shift toward specialization.

This multi-model strategy makes sense when you look at how AI is being deployed across different scenarios:

- Some tasks need quick, efficient responses

- Others require deep analysis and complex reasoning

- Many applications are cost-sensitive and need efficient processing

- Developers often need specialized capabilities for specific use cases

Each model has clear strengths and use cases, making it easier to choose the right tool for specific tasks. It is not revolutionary, but it is practical and well-thought-out.
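To make the idea of choosing the right tool concrete, here is a minimal sketch of how an application might route a task to one of the four models. The `Task` fields, the decision order, and the short model labels are illustrative assumptions, not official identifiers or a Google-recommended policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    """Hypothetical description of a request; the fields are assumptions."""
    needs_visible_steps: bool = False   # the reasoning itself should be shown
    needs_deep_reasoning: bool = False  # multi-step analysis or complex coding
    cost_sensitive: bool = False        # high volume on a tight budget


def pick_model(task: Task) -> str:
    """Return an illustrative label for the model that best fits the task."""
    if task.needs_visible_steps:
        return "flash-thinking"
    if task.needs_deep_reasoning:
        return "pro"
    if task.cost_sensitive:
        return "flash-lite"
    return "flash"  # general-purpose default


if __name__ == "__main__":
    print(pick_model(Task(cost_sensitive=True)))
```

The order of the checks is a design choice: capability requirements are tested before cost, so a task that needs deep reasoning is not silently downgraded to a cheaper model.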

Breaking Down the Gemini 2.0 Models

When you first look at Google’s Gemini 2.0 lineup, it might seem like just another set of AI models. But spending time understanding each one reveals something more interesting: a carefully planned ecosystem where each model fills a specific role.

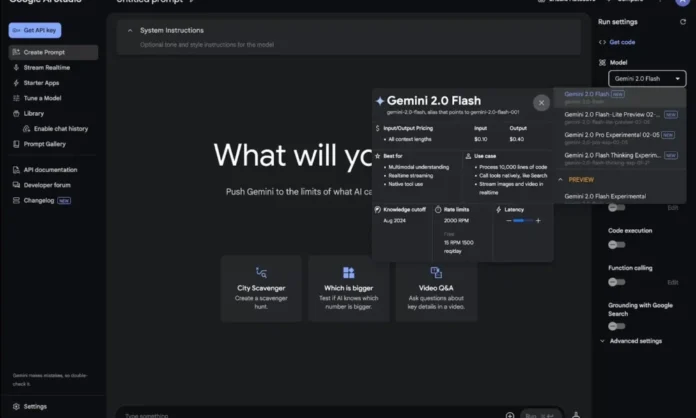

1. Gemini 2.0 Flash

Flash is Google’s answer to a fundamental AI challenge: how do you balance speed with capability? While most AI companies push for bigger models, Google took a different path with Flash.

Flash brings three key innovations:

- A massive 1M token context window that can handle entire documents

- Optimized response latency for real-time applications

- Deep integration with Google’s broader ecosystem

But what really matters is how this translates to practical use.

Flash excels at:

Document Processing

- Handles multi-page documents without breaking context

- Maintains coherent understanding across long conversations

- Processes structured and unstructured data efficiently

API Integration

- Consistent response times make it reliable for production systems

- Scales well for high-volume applications

- Supports both simple queries and complex processing tasks

Limitations to Consider

- Not optimized for specialized tasks like advanced coding

- Trades some accuracy for speed in complex reasoning tasks

- Context window, while large, still has practical limits

The integration with Google’s ecosystem deserves special attention. Flash is designed to work seamlessly with Google Cloud services, making it particularly valuable for enterprises already in the Google ecosystem.

2. Gemini 2.0 Flash-Lite

Flash-Lite might be the most pragmatic model in the Gemini 2.0 family. Instead of chasing maximum performance, Google focused on something more practical: making AI accessible and affordable at scale.

Let’s break down the economics:

- Input tokens: $0.075 per million

- Output tokens: $0.30 per million

This is a substantial reduction in the cost barrier for AI implementation. But the real story is what Flash-Lite maintains despite its efficiency focus:

Core Capabilities

- Near-Flash level performance on most general tasks

- Full 1M token context window

- Multimodal input support

Flash-Lite is not just cheaper; it is optimized for specific use cases where cost per operation matters more than raw performance:

- High-volume text processing

- Customer service applications

- Content moderation systems

- Educational tools
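To see what the Flash-Lite prices quoted above mean for a high-volume workload, here is a small worked calculation. The two rates come from this article; the workload figures (10,000 requests, 2,000 input and 500 output tokens each) are made-up numbers for illustration only.

```python
# Rates quoted in this article, in USD per one million tokens.
INPUT_USD_PER_MILLION = 0.075
OUTPUT_USD_PER_MILLION = 0.30


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimate the cost of a workload from its total token counts."""
    return (
        input_tokens * INPUT_USD_PER_MILLION
        + output_tokens * OUTPUT_USD_PER_MILLION
    ) / 1_000_000


if __name__ == "__main__":
    # Hypothetical workload: 10,000 requests of 2,000 input / 500 output tokens.
    requests = 10_000
    cost = estimate_cost_usd(requests * 2_000, requests * 500)
    print(f"{cost:.2f}")  # prints 3.00
```

In this example the 20M input tokens and the 5M output tokens each cost $1.50, which shows how the output rate, four times the input rate, can dominate the bill for workloads that generate long responses.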

3. Gemini 2.0 Pro (Experimental)

Here is where things get interesting in the Gemini 2.0 family. Gemini 2.0 Pro is Google’s vision of what AI can do when you remove typical constraints. The experimental label is important, though: it signals that Google is still finding the sweet spot between capability and reliability.

The doubled context window matters more than you might think. At 2M tokens, Pro can process:

- Multiple full-length technical documents simultaneously

- Entire codebases with their documentation

- Long-running conversations with full context
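As a rough way to judge whether a set of documents fits in the 1M or 2M token windows mentioned in this article, the sketch below estimates token counts from character counts. The four-characters-per-token ratio is a common rule of thumb for English text, not the behavior of Gemini’s actual tokenizer, and the model labels and output reserve are assumptions for illustration.

```python
# Context window sizes as stated in this article (in tokens).
CONTEXT_WINDOW_TOKENS = {
    "flash": 1_000_000,
    "flash-lite": 1_000_000,
    "pro": 2_000_000,
}

# Assumption: roughly 4 characters per token for English text.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Rough token estimate, rounded up."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(texts: list[str], model: str, reserve_for_output: int = 8_192) -> bool:
    """Check whether all texts plus an output reserve fit in the model's window."""
    total = sum(estimate_tokens(t) for t in texts)
    return total + reserve_for_output <= CONTEXT_WINDOW_TOKENS[model]


if __name__ == "__main__":
    corpus = ["x" * 6_000_000]  # about 1.5M estimated tokens
    print(fits_in_context(corpus, "flash"), fits_in_context(corpus, "pro"))
```

For real workloads, an exact count from the provider’s own token-counting facility is preferable to this heuristic, since code and non-English text often tokenize very differently.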

But raw capacity is not the full story. Pro’s architecture is built for deeper AI thinking and understanding.

Pro shows particular strength in areas requiring deep analysis:

- Complex problem decomposition

- Multi-step logical reasoning

- Nuanced pattern recognition

Google specifically optimized Pro for software development:

- Understands complex system architectures

- Handles multi-file projects coherently

- Maintains consistent coding patterns across large projects

The model is particularly suited for business-critical tasks:

- Large-scale data analysis

- Complex document processing

- Advanced automation workflows

4. Gemini 2.0 Flash Thinking

Gemini 2.0 Flash Thinking might be the most intriguing addition to the Gemini family. While other models focus on quick answers, Flash Thinking does something different: it shows its work. This transparency helps enable better human-AI collaboration.

The model breaks down complex problems into digestible pieces:

- Clearly states assumptions

- Shows logical progression

- Identifies potential alternative approaches

What sets Flash Thinking apart is its ability to tap into Google’s ecosystem:

- Real-time data from Google Search

- Location awareness through Maps

- Multimedia context from YouTube

- Tool integration for live data processing

Flash Thinking finds its niche in scenarios where understanding the process matters:

- Educational contexts

- Complex decision-making

- Technical troubleshooting

- Research and analysis

The experimental nature of Flash Thinking hints at Google’s broader vision of more sophisticated reasoning capabilities and deeper integration with external tools.

(Google DeepMind)

Technical Infrastructure and Integration

Getting Gemini 2.0 running in production requires an understanding of how these pieces fit together in Google’s broader ecosystem. Success with integration often depends on how well you map your needs to Google’s infrastructure.

The API layer serves as your entry point, offering both REST and gRPC interfaces. What is interesting is how Google has structured these APIs to maintain consistency across models while allowing access to model-specific features. You are not just calling different endpoints; you are tapping into a unified system where models can work together.
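The consistency across models can be illustrated with a small sketch that builds a REST request without sending it: the same function serves any model, and only the model name in the URL changes. The base URL, the `:generateContent` path, the `x-goog-api-key` header, and the payload shape reflect the public Gemini API as commonly documented, but treat them as assumptions and check them against the current API reference. The model identifier strings and the `build_generate_request` helper are illustrative.

```python
import json
import urllib.request

# Assumption: base URL of the public Gemini REST API; verify before use.
BASE_URL = "https://generativelanguage.googleapis.com/v1beta"


def build_generate_request(model: str, prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a text-generation request for the given model."""
    url = f"{BASE_URL}/models/{model}:generateContent"
    body = {"contents": [{"parts": [{"text": prompt}]}]}
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json", "x-goog-api-key": api_key},
        method="POST",
    )


if __name__ == "__main__":
    # Same call shape for two different models; only the name differs.
    for model in ("gemini-2.0-flash", "gemini-2.0-flash-lite"):
        req = build_generate_request(model, "Summarize this paragraph.", "YOUR_API_KEY")
        print(req.get_method(), req.full_url)
```

Sending the request would be a matter of passing it to `urllib.request.urlopen` with a valid key. In production, an official SDK is usually a better choice than hand-built HTTP calls.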

Google Cloud integration goes deeper than most realize. Beyond basic API access, you get tools for monitoring, scaling, and managing your AI workloads. The real power comes from how Gemini models integrate with other Google Cloud services, from BigQuery for data analysis to Cloud Storage for handling large contexts.

Workspace implementation shows particular promise for enterprise users. Google has woven Gemini capabilities into familiar tools like Docs and Sheets, but with a twist: you can choose which model powers different features. Need quick formatting suggestions? Flash handles that. Complex data analysis? Pro steps in.

The mobile experience deserves special attention. Google’s app is a testbed for how these models can work together in real time. You can switch between models mid-conversation, each optimized for different aspects of your task.

For developers, the tooling ecosystem continues to expand. SDKs are available for major languages, and Google has created specialized tools for common integration patterns. What is particularly useful is how the documentation adapts based on your use case, whether you are building a chat interface, data analysis tool, or code assistant.

The Bottom Line

Looking ahead, expect to see this ecosystem continue to evolve. Google’s investment in specialized models points to a future where AI becomes more task-specific rather than general-purpose. Watch for increased integration between models and expanding capabilities in each specialized area.

The strategic takeaway is not about picking winners; it is about building systems that can adapt as these tools evolve. Success with Gemini 2.0 comes from understanding not just what these models can do today, but how they fit into your longer-term AI strategy.

For developers and organizations diving into this ecosystem, the key is starting small but thinking big. Begin with focused implementations that solve specific problems. Learn from real usage patterns. Build flexibility into your systems. And most importantly, stay curious: we are still in the early chapters of what these models can do.

FAQs

1. Is Gemini 2.0 available?

Yes, Gemini 2.0 is available. The Gemini 2.0 model suite is broadly accessible through the Gemini chat app and Google Cloud’s Vertex AI platform. Gemini 2.0 Flash is generally available, Flash-Lite is in public preview, and Gemini 2.0 Pro is in experimental preview.

2. What are the main features of Gemini 2.0?

Gemini 2.0’s key features include multimodal abilities (text and image input), a large context window (1M-2M tokens), advanced reasoning (especially with Flash Thinking), integration with Google services (Search, Maps, YouTube), strong natural language processing capabilities, and scalability through models like Flash and Flash-Lite.

3. Is Gemini as good as GPT-4?

Gemini 2.0 is considered on par with GPT-4, surpassing it in some areas. Google reports that its largest Gemini model outperforms GPT-4 on 30 out of 32 academic benchmarks. Community evaluations also rank Gemini models highly. For everyday tasks, Gemini 2.0 Flash and GPT-4 perform similarly, with the choice depending on specific needs or ecosystem preference.

4. Is Gemini 2.0 safe to use?

Yes, Google has implemented safety measures in Gemini 2.0, including reinforcement learning and fine-tuning to reduce harmful outputs. Google’s AI principles guide its training, with the aim of avoiding biased responses and disallowed content. Automated security testing probes for vulnerabilities. User-facing applications have guardrails to filter inappropriate requests, supporting safe general use.

5. What does Gemini 2.0 Flash do?

Gemini 2.0 Flash is the core model designed for quick and efficient task handling. It processes prompts, generates responses, reasons, provides information, and creates text rapidly. Optimized for low latency and high throughput, it is ideal for interactive use, such as chatbots.