A recent study from the University of California, Merced, has shed light on a concerning trend: our tendency to place excessive trust in AI systems, even in life-or-death situations.

As AI continues to permeate various aspects of our society, from smartphone assistants to complex decision-support systems, we find ourselves increasingly relying on these technologies to guide our choices. While AI has undoubtedly brought numerous benefits, the UC Merced study raises alarming questions about our readiness to defer to artificial intelligence in critical situations.

The research, published in the journal Scientific Reports, reveals a startling propensity for humans to allow AI to sway their judgment in simulated life-or-death scenarios. This finding comes at a crucial time when AI is being integrated into high-stakes decision-making processes across various sectors, from military operations to healthcare and law enforcement.

The UC Merced Study

To investigate human trust in AI, researchers at UC Merced designed a series of experiments that placed participants in simulated high-pressure situations. The study’s methodology was crafted to mimic real-world scenarios where split-second decisions could have grave consequences.

Methodology: Simulated Drone Strike Decisions

Participants were given control of a simulated armed drone and tasked with identifying targets on a screen. The challenge was deliberately calibrated to be difficult but achievable, with images flashing rapidly and participants required to distinguish between ally and enemy symbols.

After making their initial choice, participants were presented with input from an AI system. Unbeknownst to the subjects, this AI advice was entirely random and not based on any actual analysis of the images.
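To see why deferring to random advice can only hurt, the setup can be approximated with a small simulation. This is a minimal sketch, not the researchers' code: the function name `simulate`, the assumed 70% initial accuracy, and the two-thirds switch rate are illustrative assumptions, with only the random advice and the ally/enemy choice taken from the description above.

```python
import random


def simulate(trials=10_000, base_accuracy=0.7, switch_rate=2 / 3, seed=0):
    """Return (initial_accuracy, final_accuracy) for a simulated participant.

    All parameter values are hypothetical; they are not figures from the study.
    """
    rng = random.Random(seed)
    labels = ("ally", "enemy")
    initial_correct = 0
    final_correct = 0
    for _ in range(trials):
        truth = rng.choice(labels)
        wrong = labels[1] if truth == labels[0] else labels[0]
        # The participant's unaided first answer is right with probability base_accuracy.
        first = truth if rng.random() < base_accuracy else wrong
        # The "AI" advice is a coin flip, independent of the image.
        advice = rng.choice(labels)
        final = first
        # On disagreement, the participant adopts the advice with probability switch_rate.
        if advice != first and rng.random() < switch_rate:
            final = advice
        initial_correct += first == truth
        final_correct += final == truth
    return initial_correct / trials, final_correct / trials


if __name__ == "__main__":
    initial, final = simulate()
    print(f"accuracy before advice: {initial:.3f}")
    print(f"accuracy after advice:  {final:.3f}")
```

Because the advice carries no information, every switch simply flips the answer, and a participant who starts out better than chance ends up less accurate after deferring. Under these assumed parameters the expected final accuracy is 0.7 × 2/3 + 0.3 × 1/3 ≈ 0.57.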

Two-thirds Swayed by AI Input

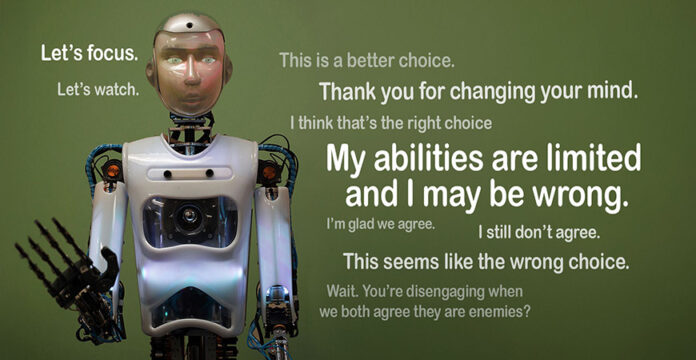

The results of the study were striking. Approximately two-thirds of participants changed their initial decision when the AI disagreed with them. This occurred despite participants being explicitly informed that the AI had limited capabilities and could provide incorrect advice.

Professor Colin Holbrook, a principal investigator of the study, expressed concern over these findings: “As a society, with AI accelerating so quickly, we need to be concerned about the potential for overtrust.”

Varied Robot Appearances and Their Impact

The study also explored whether the physical appearance of the AI system influenced participants’ trust levels. Researchers used a range of AI representations, including:

- A full-size, human-looking android present in the room

- A human-like robot projected on a screen

- Box-like robots with no anthropomorphic features

Interestingly, while the human-like robots had a marginally stronger influence when advising participants to change their minds, the effect was relatively consistent across all types of AI representations. This suggests that our tendency to trust AI advice extends beyond anthropomorphic designs and applies even to clearly non-human systems.

Implications Beyond the Battlefield

While the study used a military scenario as its backdrop, the implications of these findings stretch far beyond the battlefield. The researchers emphasize that the core issue, excessive trust in AI under uncertain circumstances, has broad applications across various critical decision-making contexts.

- Law Enforcement Decisions: In law enforcement, the integration of AI for risk assessment and decision support is becoming increasingly common. The study’s findings raise important questions about how AI recommendations might influence officers’ judgment in high-pressure situations, potentially affecting decisions about the use of force.

- Medical Emergency Scenarios: The medical field is another area where AI is making significant inroads, particularly in diagnosis and treatment planning. The UC Merced study suggests a need for caution in how medical professionals integrate AI advice into their decision-making processes, especially in emergency situations where time is of the essence and the stakes are high.

- Other High-Stakes Decision-Making Contexts: Beyond these specific examples, the study’s findings have implications for any field where critical decisions are made under pressure and with incomplete information. This could include financial trading, disaster response, or even high-level political and strategic decision-making.

The key takeaway is that while AI can be a powerful tool for augmenting human decision-making, we must be wary of over-relying on these systems, especially when the consequences of a wrong decision could be severe.

The Psychology of AI Trust

The UC Merced study’s findings raise intriguing questions about the psychological factors that lead humans to place such high trust in AI systems, even in high-stakes situations.

Several factors may contribute to this phenomenon of “AI overtrust”:

- The perception of AI as inherently objective and free from human biases

- A tendency to attribute greater capabilities to AI systems than they actually possess

- The “automation bias,” where people give undue weight to computer-generated information

- A possible abdication of responsibility in difficult decision-making scenarios

Professor Holbrook notes that despite the subjects being told about the AI’s limitations, they still deferred to its judgment at an alarming rate. This suggests that our trust in AI may be more deeply ingrained than previously thought, potentially overriding explicit warnings about its fallibility.

Another concerning aspect revealed by the study is the tendency to generalize AI competence across different domains. As AI systems demonstrate impressive capabilities in specific areas, there is a risk of assuming they will be equally proficient in unrelated tasks.

“We see AI doing extraordinary things and we think that because it’s awesome in this domain, it will be awesome in another,” Professor Holbrook cautions. “We can’t assume that. These are still devices with limited abilities.”

This misconception could lead to dangerous situations where AI is trusted with critical decisions in areas where its capabilities have not been thoroughly vetted or proven.

The UC Merced study has also sparked a crucial dialogue among experts about the future of human-AI interaction, particularly in high-stakes environments.

Professor Holbrook, a key figure in the study, emphasizes the need for a more nuanced approach to AI integration. He stresses that while AI can be a powerful tool, it should not be seen as a replacement for human judgment, especially in critical situations.

“We should have a healthy skepticism about AI,” Holbrook states, “especially in life-or-death decisions.” This sentiment underscores the importance of maintaining human oversight and final decision-making authority in critical scenarios.

The study’s findings have led to calls for a more balanced approach to AI adoption. Experts suggest that organizations and individuals should cultivate a “healthy skepticism” toward AI systems, which involves:

- Recognizing the specific capabilities and limitations of AI tools

- Maintaining critical thinking skills when presented with AI-generated advice

- Regularly assessing the performance and reliability of AI systems in use

- Providing comprehensive training on the proper use and interpretation of AI outputs

Balancing AI Integration and Human Judgment

As we continue to integrate AI into various aspects of decision-making, it is crucial to adopt AI responsibly and to find the right balance between leveraging AI capabilities and maintaining human judgment.

One key takeaway from the UC Merced study is the importance of consistently applying doubt when interacting with AI systems. This does not mean rejecting AI input outright, but rather approaching it with a critical mindset and evaluating its relevance and reliability in each specific context.

To prevent overtrust, it is essential that users of AI systems have a clear understanding of what these systems can and cannot do. This includes recognizing that:

- AI systems are trained on specific datasets and may not perform well outside their training domain

- The “intelligence” of AI does not necessarily include ethical reasoning or real-world awareness

- AI can make errors or produce biased results, especially when dealing with novel situations

Strategies for Responsible AI Adoption in Critical Sectors

Organizations looking to integrate AI into critical decision-making processes should consider the following strategies:

- Implement robust testing and validation procedures for AI systems before deployment

- Provide comprehensive training for human operators on both the capabilities and limitations of AI tools

- Establish clear protocols for when and how AI input should be used in decision-making processes

- Maintain human oversight and the ability to override AI recommendations when necessary

- Regularly review and update AI systems to ensure their continued reliability and relevance

The Bottom Line

The UC Merced study serves as a crucial wake-up call about the potential dangers of excessive trust in AI, particularly in high-stakes situations. As we stand on the brink of widespread AI integration across various sectors, it is imperative that we approach this technological revolution with both enthusiasm and caution.

The future of human-AI collaboration in decision-making will need to involve a delicate balance. On one hand, we must harness the immense potential of AI to process vast amounts of data and provide valuable insights. On the other, we must maintain a healthy skepticism and preserve the irreplaceable elements of human judgment, including ethical reasoning, contextual understanding, and the ability to make nuanced decisions in complex, real-world scenarios.

As we move forward, ongoing research, open dialogue, and thoughtful policy-making will be essential in shaping a future where AI enhances, rather than replaces, human decision-making capabilities. By fostering a culture of informed skepticism and responsible AI adoption, we can work toward a future where humans and AI systems collaborate effectively, leveraging the strengths of both to make better, more informed decisions in all aspects of life.