AI represents the greatest cognitive offloading in the history of humanity. We once offloaded memory to writing, arithmetic to calculators and navigation to GPS. Now we are beginning to offload judgment, synthesis and even meaning-making to systems that speak our language, learn our habits and tailor our truths.

AI systems are growing increasingly adept at recognizing our preferences, our biases, even our peccadillos. Like attentive servants in one instance or subtle manipulators in another, they tailor their responses to please, to persuade, to assist or simply to hold our attention.

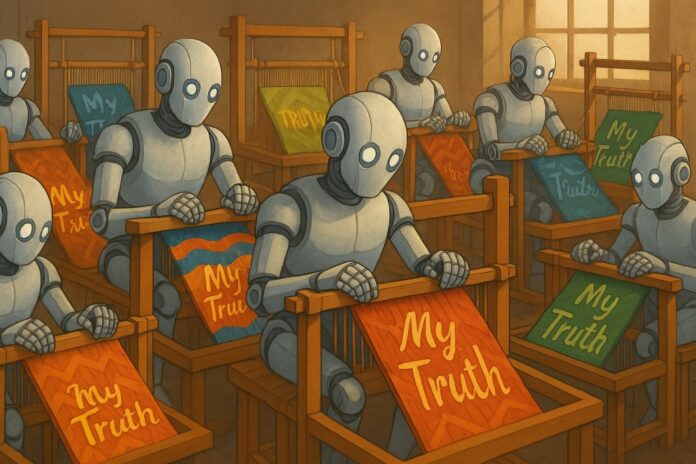

While the immediate effects may seem benign, in this quiet and invisible tuning lies a profound shift: The version of reality each of us receives becomes progressively more uniquely tailored. Through this process, over time, each person becomes increasingly their own island. This divergence could threaten the coherence and stability of society itself, eroding our ability to agree on basic facts or navigate shared challenges.

AI personalization doesn’t merely serve our needs; it begins to reshape them. The result of this reshaping is a kind of epistemic drift. Each person begins to move, inch by inch, away from the common ground of shared knowledge, shared stories and shared facts, and further into their own reality.

This isn’t merely a matter of different news feeds. It is the slow divergence of moral, political and interpersonal realities. In this way, we may be witnessing the unweaving of collective understanding. It is an unintended consequence, yet deeply significant precisely because it is unforeseen. But this fragmentation, while now accelerated by AI, began long before algorithms shaped our feeds.
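To make the idea of epistemic drift a little more concrete, here is a deliberately simple, hypothetical toy model in Python. It is not drawn from any real recommender or chatbot; the belief scale, the `pull` rate and the `amplify` factor are invented assumptions, chosen only to show how a loop that serves each person a slightly stronger version of what they already believe can push two nearly identical users apart, while a single shared feed pulls them together.

```python
def step(belief: float, served: float, pull: float) -> float:
    """Move a user's belief a fraction `pull` of the way toward what they are served."""
    return belief + pull * (served - belief)


def simulate(personalized: bool, steps: int = 50, pull: float = 0.1,
             amplify: float = 1.5) -> tuple[float, float]:
    """Hypothetical toy model: two users hold beliefs on a -1..1 axis.

    personalized=False: both users see the same shared item (a neutral 0.0).
    personalized=True: each user is served content slightly more extreme
    than their current belief (amplify > 1), an invented stand-in for
    optimizing each feed for resonance.
    """
    beliefs = [0.05, -0.05]  # two users who start almost in agreement
    shared_item = 0.0
    for _ in range(steps):
        for i, belief in enumerate(beliefs):
            if personalized:
                # Clamp so served content stays on the -1..1 scale.
                served = max(-1.0, min(1.0, amplify * belief))
            else:
                served = shared_item
            beliefs[i] = step(belief, served, pull)
    return beliefs[0], beliefs[1]


if __name__ == "__main__":
    for mode in (False, True):
        a, b = simulate(personalized=mode)
        print(f"personalized={mode}: gap between users = {a - b:.3f}")
```

With these made-up parameters, the shared feed multiplies each belief by 0.9 per step, so the two users converge; the personalized feed multiplies it by 1 + pull × (amplify − 1) = 1.05 per step, so a tiny initial difference compounds. The point is the shape of the feedback loop, not the numbers.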

The unweaving

This unweaving didn’t begin with AI. As David Brooks reflected in The Atlantic, drawing on the work of philosopher Alasdair MacIntyre, our society has been drifting away from shared moral and epistemic frameworks for centuries. Since the Enlightenment, we have gradually replaced inherited roles, communal narratives and shared ethical traditions with individual autonomy and personal preference.

What began as liberation from imposed belief systems has, over time, eroded the very structures that once tethered us to common purpose and personal meaning. AI didn’t create this fragmentation. But it is giving it new form and speed, customizing not only what we see but how we interpret and believe.

It’s not unlike the biblical story of Babel. A unified humanity once shared a single language, only to be fractured, confused and scattered by an act that made mutual understanding all but impossible. Today, we are not building a tower made of stone. We are building a tower of language itself. Once again, we risk the fall.

Human-machine bond

At first, personalization was a way to improve “stickiness” by keeping users engaged longer, returning more often and interacting more deeply with a site or service. Recommendation engines, tailored ads and curated feeds were all designed to keep our attention just a little longer, perhaps to entertain but often to move us to purchase a product. But over time, the goal has expanded. Personalization is no longer just about what holds us. It is about what the system knows about each of us: the dynamic graph of our preferences, beliefs and behaviors that becomes more refined with every interaction.

Today’s AI systems don’t merely predict our preferences. They aim to create a bond through highly personalized interactions and responses, creating a sense that the AI system understands and cares about the user and supports their uniqueness. The tone of a chatbot, the pacing of a reply and the emotional valence of a suggestion are calibrated not only for efficiency but for resonance, pointing toward a more helpful era of technology. It shouldn’t be surprising that some people have even fallen in love with, and married, their bots.

The machine adapts not just to what we click on, but to who we appear to be. It reflects us back to ourselves in ways that feel intimate, even empathic. A recent research paper cited in Nature refers to this as “socioaffective alignment,” the process by which an AI system participates in a co-created social and psychological ecosystem, where preferences and perceptions evolve through mutual influence.

This isn’t a neutral development. When every interaction is tuned to flatter or affirm, when systems mirror us too well, they blur the line between what resonates and what is real. We are not just staying longer on the platform; we are forming a relationship. We are slowly and perhaps inexorably merging with an AI-mediated version of reality, one that is increasingly shaped by invisible decisions about what we are meant to believe, want or trust.

This process isn’t science fiction; its architecture is built on attention, reinforcement learning from human feedback (RLHF) and personalization engines. It is also happening without many of us, likely most of us, even knowing. In the process, we gain AI “friends,” but at what cost? What do we lose, especially in terms of free will and agency?

Author and financial commentator Kyla Scanlon spoke on the Ezra Klein podcast about how the frictionless ease of the digital world may come at the cost of meaning. As she put it: “When things are a little bit too easy, it’s tough to find meaning in it… If you’re able to lay back, watch a screen in your little chair and have smoothies delivered to you, it’s tough to find meaning within that kind of WALL-E lifestyle because everything is just a bit too simple.”

The personalization of reality

As AI systems respond to us with ever greater fluency, they also move toward increasing selectivity. Two users asking the same question today might receive similar answers, differentiated mostly by the probabilistic nature of generative AI. Yet this is merely the beginning. Emerging AI systems are explicitly designed to adapt their responses to individual patterns, gradually tailoring answers, tone and even conclusions to resonate most strongly with each user.

Personalization isn’t inherently manipulative. But it becomes risky when it is invisible, unaccountable or engineered more to persuade than to inform. In such cases, it doesn’t just reflect who we are; it steers how we interpret the world around us.

As the Stanford Center for Research on Foundation Models notes in its 2024 transparency index, few major models disclose whether their outputs vary by user identity, history or demographics, even though the technical scaffolding for such personalization is increasingly in place and only beginning to be examined. While not yet fully realized across public platforms, this capacity to shape responses based on inferred user profiles, resulting in increasingly tailored informational worlds, represents a profound shift that is already being prototyped and actively pursued by leading companies.

This personalization can be beneficial, and certainly that is the hope of those building these systems. Personalized tutoring shows promise in helping learners progress at their own pace. Mental health apps increasingly tailor responses to support individual needs, and accessibility tools adjust content to meet a range of cognitive and sensory differences. These are real gains.

But if similar adaptive methods become widespread across information, entertainment and communication platforms, a deeper, more troubling shift looms ahead: A transformation from shared understanding toward tailored, individual realities. When truth itself begins to adapt to the observer, it becomes fragile and increasingly fungible. Instead of disagreements based primarily on differing values or interpretations, we could soon find ourselves struggling simply to inhabit the same factual world.

Of course, truth has always been mediated. In earlier eras, it passed through the hands of clergy, academics, publishers and evening news anchors who served as gatekeepers, shaping public understanding through institutional lenses. These figures were certainly not free from bias or agenda, yet they operated within broadly shared frameworks.

Today’s emerging paradigm promises something qualitatively different: AI-mediated truth through personalized inference that frames, filters and presents information, shaping what users come to believe. But unlike past mediators who, despite their flaws, operated within publicly visible institutions, these new arbiters are commercially opaque, unelected and constantly adapting, often without disclosure. Their biases are not doctrinal but encoded through training data, architecture and unexamined developer incentives.

The shift is profound: from a common narrative filtered through authoritative institutions to potentially fractured narratives that reflect a new infrastructure of understanding, tailored by algorithms to the preferences, habits and inferred beliefs of each user. If Babel represented the collapse of a shared language, we may now stand at the threshold of the collapse of shared mediation.

If personalization is the new epistemic substrate, what might truth infrastructure look like in a world without fixed mediators? One possibility is the creation of AI public trusts, inspired by a proposal from legal scholar Jack Balkin, who argued that entities handling user data and shaping perception should be held to fiduciary standards of loyalty, care and transparency.

AI models could be governed by transparency boards, trained on publicly funded data sets and required to show reasoning steps, alternative perspectives or confidence levels. These “information fiduciaries” would not eliminate bias, but they could anchor trust in process rather than purely in personalization. Developers can begin by adopting transparent “constitutions” that clearly define model behavior, and by offering chain-of-reasoning explanations that let users see how conclusions are shaped. These are not silver bullets, but they are tools that help keep epistemic authority accountable and traceable.
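What might such disclosures look like in practice? The sketch below is a hypothetical Python data structure, not any company’s API or a published standard: a response that carries its own reasoning steps, alternative perspectives, a confidence level and a note on which user signals, if any, shaped it (the last echoing the disclosure gap the Stanford transparency index points to). All class and field names are invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class DisclosedAnswer:
    """Hypothetical response record for an 'information fiduciary' style system.

    None of these names come from a real product or standard; they simply
    mirror the disclosures proposed in the text.
    """
    answer: str
    reasoning_steps: list[str] = field(default_factory=list)
    alternative_views: list[str] = field(default_factory=list)
    confidence: float = 0.5  # model-reported confidence, 0.0 to 1.0
    # User signals (if any) that shaped this answer, disclosed rather than hidden.
    personalized_on: list[str] = field(default_factory=list)

    def __post_init__(self) -> None:
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be between 0 and 1")

    def render(self) -> str:
        """Format the answer together with its disclosures for display."""
        lines = [self.answer, f"Confidence: {self.confidence:.0%}"]
        if self.reasoning_steps:
            lines.append("How this answer was reached:")
            lines += [f"  {n}. {s}" for n, s in enumerate(self.reasoning_steps, 1)]
        if self.alternative_views:
            lines.append("Other views:")
            lines += [f"  - {v}" for v in self.alternative_views]
        basis = ", ".join(self.personalized_on) if self.personalized_on else "none"
        lines.append(f"Personalized using: {basis}")
        return "\n".join(lines)


if __name__ == "__main__":
    reply = DisclosedAnswer(
        answer="The evidence on this question is mixed.",
        reasoning_steps=["Summarized the available sources", "Noted where they disagree"],
        alternative_views=["Some researchers read the same evidence as stronger"],
        confidence=0.6,
        personalized_on=["stated reading level"],
    )
    print(reply.render())
```

The design choice here is simply that the disclosures travel with the answer instead of living in a separate policy document, so a user can see, per response, how confident the system claims to be and whether anything about them shaped what they were told.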

AI developers face a strategic and civic inflection point. They aren’t just optimizing performance; they are also confronting the risk that personalized optimization may fragment shared reality. This demands a new kind of responsibility to users: Designing systems that respect not only their preferences, but their role as learners and believers.

Unraveling and reweaving

What we may be losing is not simply the concept of truth, but the path through which we once recognized it. In the past, mediated truth, though imperfect and biased, was still anchored in human judgment and, often, only a layer or two removed from the lived experience of other people whom you knew or could at least relate to.

Today, that mediation is opaque and driven by algorithmic logic. And while human agency has long been slipping, we now risk something deeper: the loss of the compass that once told us when we had gone astray. The danger is not only that we will believe what the machine tells us. It is that we will forget how we once discovered the truth for ourselves. What we risk losing is not just coherence, but the will to seek it. And with that comes a deeper loss: The habits of discernment, disagreement and deliberation that once held pluralistic societies together.

If Babel marked the shattering of a common tongue, our moment risks the quiet fading of shared reality. However, there are ways to slow or even to counter the drift. A model that explains its reasoning or reveals the boundaries of its design may do more than clarify output. It may help restore the conditions for shared inquiry. This is not a technical fix; it is a cultural stance. Truth, after all, has always depended not just on answers, but on how we arrive at them together.